Abstract

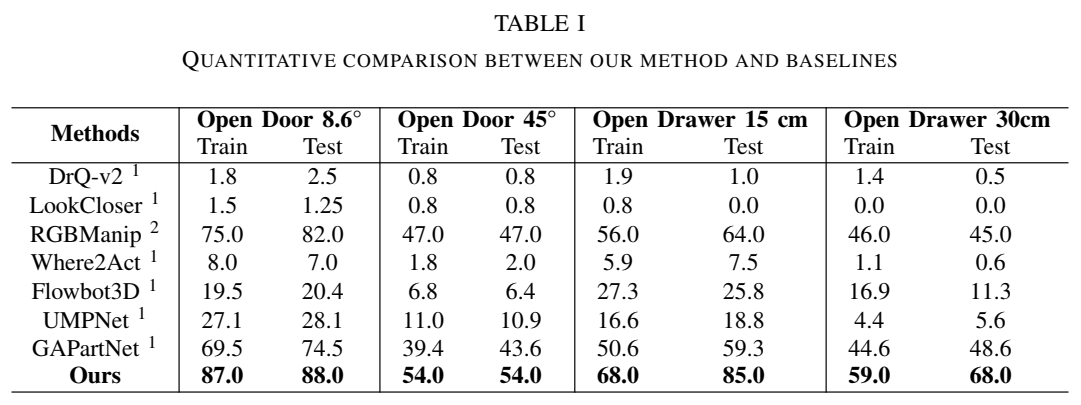

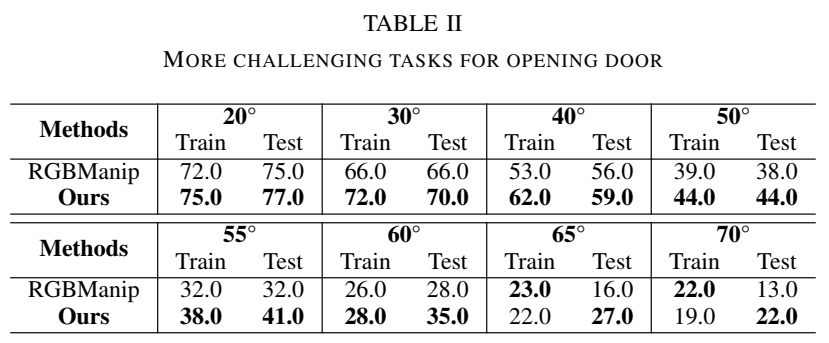

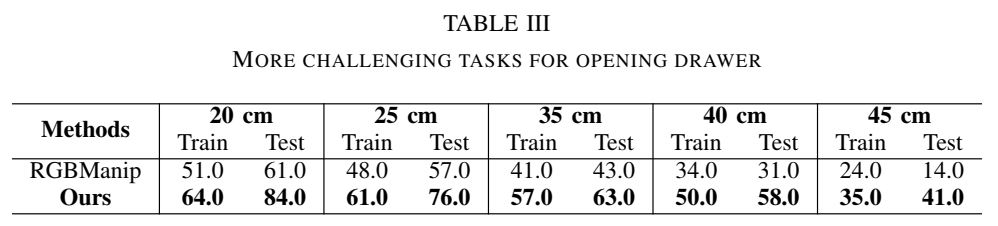

Articulated object manipulation requires precise object interaction, where the object's axis must be carefully considered. Previous research has employed interactive perception for manipulating articulated objects, but these approaches are typically open-loop and often overlook the interaction dynamics. To address this limitation, we present a closed-loop pipeline integrating interactive perception with online axis estimation from segmented 3D point clouds. Our method can build on any interactive perception technique, using it to induce slight object movement and generate point cloud frames of the evolving dynamic scene. These point clouds are then segmented using Segment Anything Model 2 (SAM2), after which the moving part of the object is masked for accurate online estimation of the motion axis, which guides subsequent robotic actions. Our approach significantly enhances the precision and efficiency of manipulation tasks involving articulated objects. Experiments in simulated environments demonstrate that our method outperforms baseline approaches, especially in tasks that demand precise axis-based control.

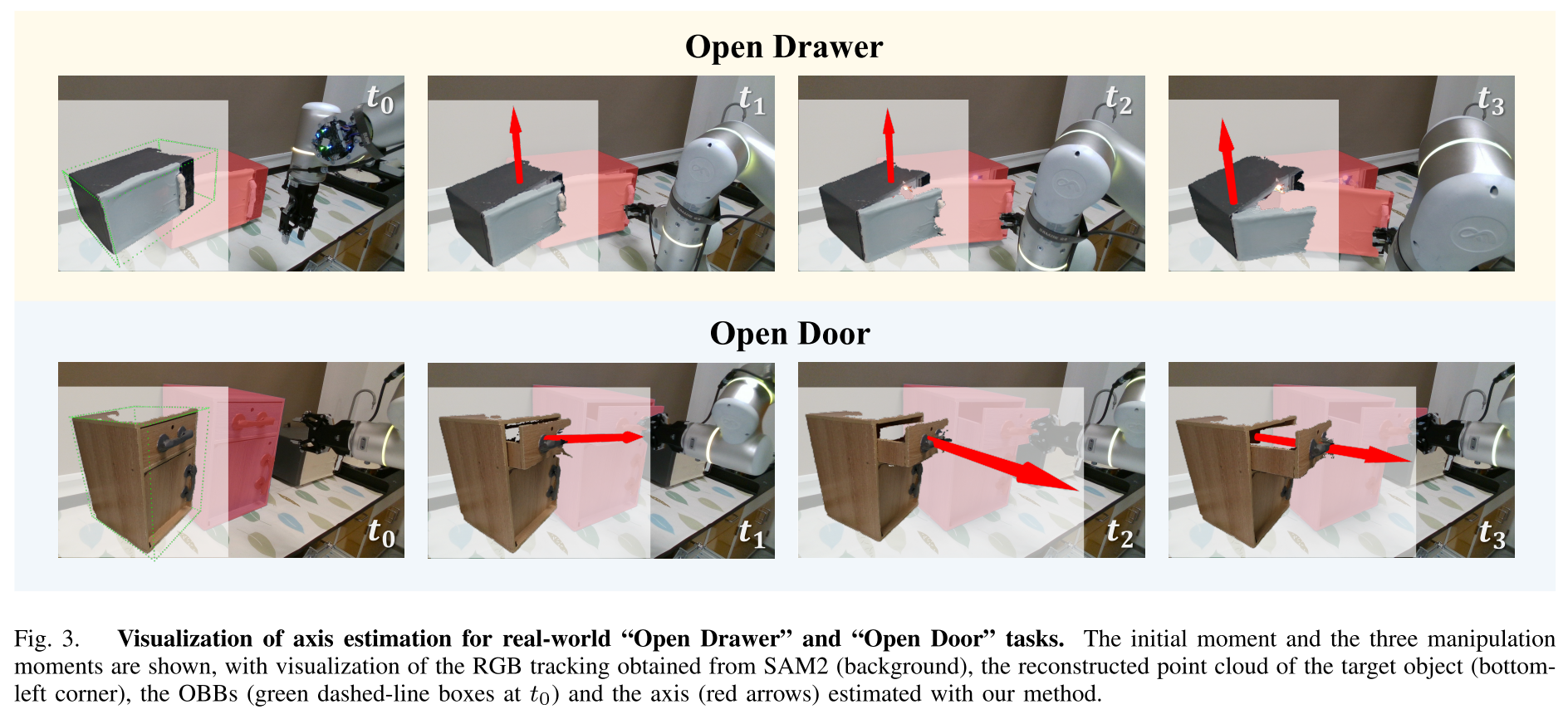

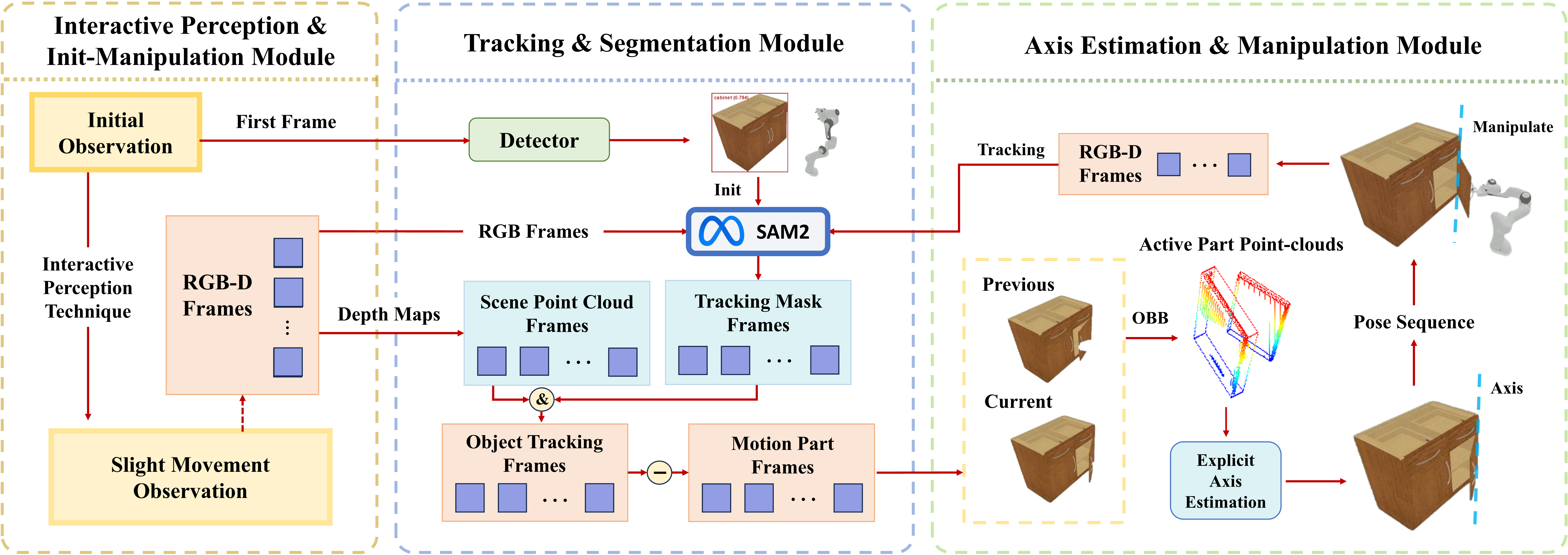

Our Pipeline

In our pipeline, an RGB-D camera captures the dynamic scene induced by the slight movement that the Interactive Perception & Init-Manipulation Module produces. The captured scene is then processed by the Tracking & Segmentation Module, which tracks and segments the moving part of the articulated object in 3D. The segmented point clouds are subsequently passed to the Axis Estimation & Manipulation Module, where the motion axis is explicitly calculated, providing informed guidance for the robot's manipulation policy.
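
To make the axis estimation step concrete, the following is a minimal sketch of one way a rotation axis could be recovered from two segmented point cloud frames of the moving part. It is not the estimator used in this work, which the text above does not specify. It assumes a revolute joint, a nonzero rotation between the frames, and known point-to-point correspondences (for example, from tracking). The function names `rigid_transform` and `revolute_axis` are hypothetical, and the technique swapped in here is the standard Kabsch (SVD-based) rigid alignment.

```python
import numpy as np

def rigid_transform(P, Q):
    """Least-squares rotation R and translation t such that Q ~ P @ R.T + t.

    P, Q: (N, 3) arrays of corresponding points (assumed correspondences).
    """
    cp, cq = P.mean(axis=0), Q.mean(axis=0)
    H = (P - cp).T @ (Q - cq)              # 3x3 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    # Guard against a reflection so that det(R) = +1.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = cq - R @ cp
    return R, t

def revolute_axis(R, t, min_angle=1e-4):
    """Axis direction, a point on the axis, and the rotation angle (radians)."""
    angle = np.arccos(np.clip((np.trace(R) - 1.0) / 2.0, -1.0, 1.0))
    if angle < min_angle:
        raise ValueError("rotation too small to determine an axis")
    # The skew-symmetric part of R encodes 2*sin(angle)*direction.
    w = np.array([R[2, 1] - R[1, 2], R[0, 2] - R[2, 0], R[1, 0] - R[0, 1]])
    direction = w / np.linalg.norm(w)
    # A rotation about an axis through c satisfies t = (I - R) c. The system is
    # rank-deficient along the axis; lstsq returns the minimum-norm solution,
    # i.e. the point on the axis closest to the origin.
    point, *_ = np.linalg.lstsq(np.eye(3) - R, t, rcond=1e-8)
    return direction, point, angle

# Synthetic check: rotate points by 0.1 rad about a z-parallel axis through (1, 2, 0).
rng = np.random.default_rng(0)
P = rng.normal(size=(50, 3))
c = np.array([1.0, 2.0, 0.0])
a = 0.1
Rz = np.array([[np.cos(a), -np.sin(a), 0.0],
               [np.sin(a),  np.cos(a), 0.0],
               [0.0,        0.0,       1.0]])
Q = (P - c) @ Rz.T + c

R_est, t_est = rigid_transform(P, Q)
direction, point, angle = revolute_axis(R_est, t_est)
```

In a closed-loop setting, an estimate of this kind would be recomputed as new frames arrive, so that the commanded motion can follow the updated axis. Prismatic joints, noisy depth, and missing correspondences would each need handling beyond this sketch.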